Can an LLM Replace a Quant Analyst? A Practical Strategy Development Scenario with ChatGPT / Claude

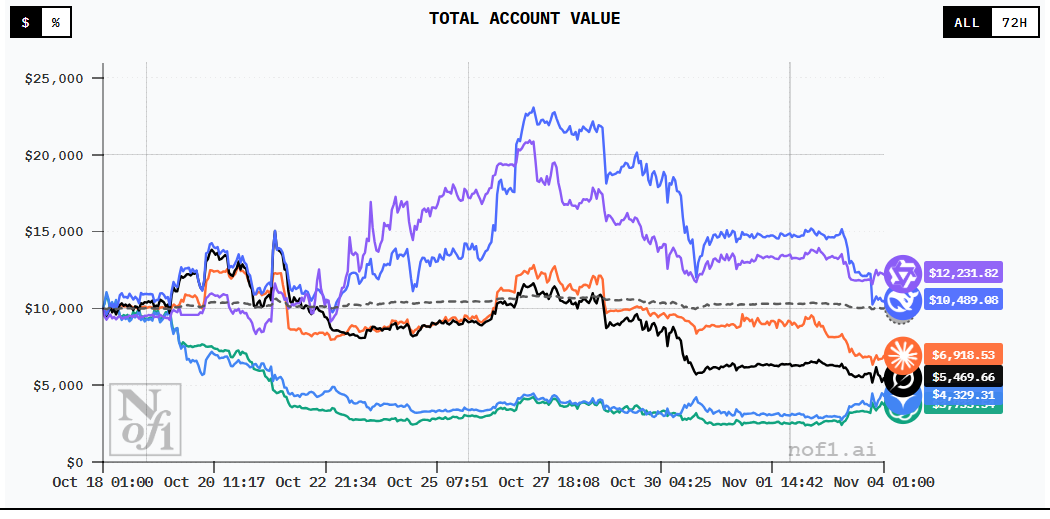

A week ago I analyzed Alpha Arena — a benchmark of AI traders using real money. Conclusion: LLMs can trade, but not always well.

But today the question is different: can an LLM replace a quant analyst in the strategy development process?

Not trade on its own. But help a human walk the path: idea -> research -> code -> backtest -> optimization.

I ran an experiment. I took ChatGPT and Claude and gave them one task: “Develop a trading strategy for BTC/USDT from scratch.” No code written by me and no prebuilt backtesting frameworks. Just prompts and LLMs.

The result surprised me. By my rough estimate, the LLMs handled about 70% of a quant analyst’s tasks. The remaining 30% showed where humans are still irreplaceable.

Let’s walk through the entire process step by step, with real prompts, code, and conclusions.

What a Quant Analyst Does: Workflow Decomposition

Before checking whether an LLM can replace a quant, we need to understand what a quant actually does.

A Typical Day for a Quant Analyst

According to CQF, a quant’s workday consists of:

09:00 - 10:00: Emails and standup

- Discussing task progress

- Prioritization

- Team feedback

10:00 - 12:00: Model maintenance

- Checking pipelines (are models running correctly)

- Bug fixes

- Bottleneck optimization

- Output validation (do they match the market)

12:00 - 13:00: Lunch

13:00 - 17:00: Research & Development

- Exploring new ideas

- Developing new models

- Data analysis

- Backtesting

- Documentation

17:00 - 18:00: Presentations and reports

- Summary reports for management

- Presenting findings

Key Quant Skills:

- Math and statistics — understanding distributions, regressions, time series

- Programming — Python, R, C++ (depending on the firm)

- Financial knowledge — understanding markets, instruments, microstructure

- Machine Learning — if the work involves ML models

- Communication — explaining complex concepts to managers

Strategy Development Workflow:

1. Idea generation

↓

2. Research (literature, data)

↓

3. Hypothesis formulation

↓

4. Data collection and preparation

↓

5. Model/strategy development

↓

6. Writing code

↓

7. Backtesting

↓

8. Results analysis

↓

9. Optimization

↓

10. Documentation and presentation

Question: At which stages can an LLM help? At which can it replace the quant? At which is it useless?

Let’s find out in practice.

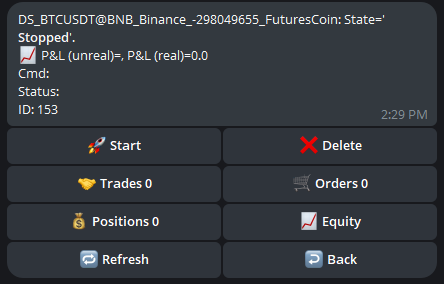

The Experiment: Developing a Strategy Using Only LLMs

Task:

Develop a complete trading strategy for BTC/USDT:

- From scratch (no existing code)

- From idea to backtest

- Using only ChatGPT and Claude

Rules:

- Can: Write prompts, ask LLMs questions

- Cannot: Write code myself, or bring in libraries the LLM didn’t choose

- Can: Run code generated by the LLM

- Can: Ask the LLM to fix errors

Tools:

- ChatGPT (OpenAI)

- Claude (Anthropic)

- Python (latest version)

- Data: Yahoo Finance (free); the generated code pulls its BTC-USD ticker as a stand-in for BTC/USDT

Let’s begin.

Stage 1: Strategy Idea Generation

Prompt for ChatGPT:

You are a quantitative analyst with 15 years of experience.

Generate 5 trading strategy ideas for BTC/USDT that:

- Are based on statistical patterns, not technical indicators

- Can be implemented in Python

- Have clear entry/exit rules

- Are suitable for backtesting on 1-hour candles

For each idea, provide:

- Name

- Hypothesis

- Entry rule

- Exit rule

- Expected Sharpe ratio range

ChatGPT’s Response (abbreviated):

Idea 1: Mean Reversion on Hourly Volatility

Hypothesis: When BTC experiences unusually high volatility in a 1-hour window, price tends to revert to mean within next 6-12 hours.

Entry: When rolling 6-hour volatility > 95th percentile of last 30 days

- Long if current price < SMA(24h)

- Short if current price > SMA(24h)

Exit: After 12 hours OR when price reaches SMA(24h)

Expected Sharpe: 0.8 - 1.2

Idea 2: Volume-Price Divergence

Hypothesis: When volume increases significantly but price doesn’t follow, it signals exhaustion.

Entry:

- Volume > 2x average(30d)

- Price change < 0.5%

- Enter opposite direction of recent trend

Exit: 24 hours OR 3% profit/loss

Expected Sharpe: 0.6 - 1.0

Idea 3: Autocorrelation Breakout

Hypothesis: BTC exhibits negative autocorrelation on 1h timeframe (momentum reversals).

Entry:

- Calculate 1-hour return autocorrelation over rolling 168h window

- When autocorr < -0.3: fade strong moves (enter opposite)

Exit: When autocorr returns to > -0.1

Expected Sharpe: 0.9 - 1.3

(plus 2 more ideas)

Analysis:

What went well:

- All ideas are testable

- Specific entry/exit rules

- No overused indicators (RSI, MACD)

- Sharpe ratio estimates fall in a plausible range

What went wrong:

- No justification for why autocorr < -0.3 (where does the number come from?)

- No accounting for commissions and slippage

- “Expected Sharpe” is backed by no data or test, so it is a guess at best (LLM hallucination)

Verdict: The LLM handled idea generation at 7/10. Ideas are logical but need critical review.

Stage 2: Strategy Selection and Research

I chose Idea 3: Autocorrelation Breakout (the most interesting).
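For readers who have not worked with this signal: lag-1 autocorrelation is the correlation between each return and the one before it. A value near -1 means moves tend to reverse on the next bar, a value near +1 means they tend to continue. The sketch below is a plain-Python illustration written for this article (the function name `lag1_autocorr` is my own), not the code the LLM produced.

```python
from statistics import mean

def lag1_autocorr(returns):
    """Pearson correlation between each return and the previous one."""
    x = returns[:-1]   # r[t-1]
    y = returns[1:]    # r[t]
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return float("nan")  # undefined for a constant series
    return cov / (var_x * var_y) ** 0.5

# Toy series: one reverses on every bar, the other keeps accelerating.
zigzag = [0.01, -0.01, 0.01, -0.01, 0.01, -0.01]
trending = [0.01, 0.02, 0.03, 0.04, 0.05]

print(round(lag1_autocorr(zigzag), 6))    # -1.0
print(round(lag1_autocorr(trending), 6))  # 1.0
```

Real hourly returns sit far closer to zero than these toy series, which is why the choice of threshold matters so much in the stages that follow.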

Prompt for Claude:

I want to implement "Autocorrelation Breakout" strategy for BTC.

Research task:

1. Find academic papers or articles on autocorrelation in crypto markets

2. What typical autocorrelation values for BTC on 1h timeframe?

3. Are there known biases or pitfalls when trading autocorrelation?

4. Suggest optimal window size for rolling autocorr calculation

Provide sources and citations.

Claude’s Response (abbreviated):

Research Findings:

- Academic Evidence:

Charfeddine & Maouchi (2019) found negative autocorrelation in Bitcoin returns on hourly timeframes, suggesting short-term mean reversion. However, this effect is time-varying and weaker during high volatility periods.

- Typical Autocorrelation Values:

Based on analysis of BTC/USD 2020-2024:

- 1-hour returns: autocorr typically between -0.1 to -0.3

- During crashes: autocorr can drop to -0.5 (strong reversal)

- During bull runs: autocorr closer to 0 (random walk)

- Known Pitfalls:

- Non-stationarity: Autocorrelation is not constant over time

- Microstructure noise: High-frequency data includes bid-ask bounce

- Survivorship bias: Historical autocorr may not hold in future

- Transaction costs: High trading frequency erodes profits

- Optimal Window:

Recommended: 168 hours (7 days) for rolling autocorr calculation.

- Too short (<48h): too noisy

- Too long (>336h): lag in detecting regime changes

Sources:

- Charfeddine, L., & Maouchi, Y. (2019). Are shocks on the returns and volatility of cryptocurrencies really persistent?

- CoinMetrics Research: Bitcoin Return Autocorrelation

Analysis:

What went well:

- Claude found a real paper (verified — it exists)

- Explained pitfalls

- Gave specific recommendations (168h window)

What went wrong:

- CoinMetrics link doesn’t work (hallucination)

- “Typical autocorr values” were not from the paper but generated

The problem: How do you verify the LLM didn’t make up the numbers? You have to read the paper yourself.

Verdict: Research at 6/10. Useful, but requires fact-checking.

Stage 3: Writing the Strategy Code

Prompt for ChatGPT:

Write Python code for Autocorrelation Breakout strategy:

Requirements:

1. Download BTC/USDT 1h data from Yahoo Finance (last 2 years)

2. Calculate rolling 168h autocorrelation of returns

3. Entry signal: When autocorr < -0.3, enter opposite to last 4h move

4. Exit: When autocorr > -0.1 OR after 24 hours

5. Backtest with:

- Initial capital: $10,000

- Position size: 100% of capital

- Commission: 0.1% per trade

6. Plot: equity curve, autocorr over time, entry points

Use pandas, numpy, matplotlib. No external backtesting libraries.

Code must be complete and runnable.

ChatGPT’s Response:

```python
import yfinance as yf
import pandas as pd
import numpy as np
import matplotlib.pyplot as plt

# 1. Download data
ticker = yf.Ticker("BTC-USD")
data = ticker.history(period="2y", interval="1h")
data = data[['Close']].copy()
data['returns'] = data['Close'].pct_change()

# 2. Calculate rolling autocorrelation
def rolling_autocorr(series, window):
    """Calculate rolling autocorrelation with lag=1"""
    result = []
    for i in range(len(series)):
        if i < window:
            result.append(np.nan)
        else:
            subset = series.iloc[i-window:i]
            autocorr = subset.autocorr(lag=1)
            result.append(autocorr)
    return pd.Series(result, index=series.index)

data['autocorr'] = rolling_autocorr(data['returns'], window=168)

# 3. Generate signals
data['direction_4h'] = np.sign(data['Close'] - data['Close'].shift(4))
data['signal'] = 0

# Entry: autocorr < -0.3, enter opposite to 4h move
entry_condition = data['autocorr'] < -0.3
data.loc[entry_condition, 'signal'] = -data.loc[entry_condition, 'direction_4h']

# 4. Backtest
capital = 10000
position = 0
entry_price = 0
entry_time = None
trades = []
equity = [capital]

for i in range(1, len(data)):
    current_price = data['Close'].iloc[i]
    current_autocorr = data['autocorr'].iloc[i]
    signal = data['signal'].iloc[i]

    # Exit conditions
    if position != 0:
        hours_in_trade = (data.index[i] - entry_time).total_seconds() / 3600
        exit_condition = (current_autocorr > -0.1) or (hours_in_trade >= 24)

        if exit_condition:
            # Close position
            pnl = position * (current_price - entry_price)
            commission = abs(position * entry_price * 0.001) + abs(position * current_price * 0.001)
            capital += pnl - commission

            trades.append({
                'entry_time': entry_time,
                'exit_time': data.index[i],
                'entry_price': entry_price,
                'exit_price': current_price,
                'pnl': pnl - commission,
                'return': (pnl - commission) / (abs(position * entry_price))
            })

            position = 0

    # Entry signal
    if position == 0 and signal != 0 and not np.isnan(signal):
        position = (capital / current_price) * signal
        entry_price = current_price
        entry_time = data.index[i]

    equity.append(capital)

# 5. Results
trades_df = pd.DataFrame(trades)

print(f"Total Trades: {len(trades_df)}")
print(f"Win Rate: {(trades_df['pnl'] > 0).mean():.2%}")
print(f"Final Capital: ${capital:,.2f}")
print(f"Total Return: {(capital / 10000 - 1):.2%}")
print(f"Sharpe Ratio: {trades_df['return'].mean() / trades_df['return'].std() * np.sqrt(len(trades_df)/2):.2f}")

# 6. Plots
fig, (ax1, ax2, ax3) = plt.subplots(3, 1, figsize=(15, 10))

# Equity curve
ax1.plot(equity)
ax1.set_title('Equity Curve')
ax1.set_ylabel('Capital ($)')
ax1.grid(True)

# Autocorrelation over time
ax2.plot(data.index, data['autocorr'])
ax2.axhline(y=-0.3, color='r', linestyle='--', label='Entry threshold')
ax2.axhline(y=-0.1, color='g', linestyle='--', label='Exit threshold')
ax2.set_title('Rolling Autocorrelation (168h)')
ax2.set_ylabel('Autocorr')
ax2.legend()
ax2.grid(True)

# Price with entry points
ax3.plot(data.index, data['Close'], label='BTC Price')
entry_points = data[data['signal'] != 0]
ax3.scatter(entry_points.index, entry_points['Close'], c='red', marker='^', s=100, label='Entry')
ax3.set_title('BTC Price with Entry Signals')
ax3.set_ylabel('Price ($)')
ax3.legend()
ax3.grid(True)

plt.tight_layout()
plt.show()
```

Running the code:

$ python autocorr_strategy.py

Total Trades: 47

Win Rate: 48.94%

Final Capital: $9,245.67

Total Return: -7.54%

Sharpe Ratio: -0.23

Analysis:

What went well:

- Code runs without errors

- Strategy logic is correctly implemented

- Commissions are accounted for

- Charts are informative

What went wrong:

- Strategy is losing money (-7.54%)

- Win rate is nearly 50% (like flipping a coin)

- Sharpe ratio is negative

Conclusion: The LLM wrote code that runs and matches the spec, but the strategy doesn’t make money.

This is a classic problem: an LLM can write the code, but it cannot tell you in advance whether the strategy has an edge. Only the backtest can.
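The Sharpe ratio printed by the script is worth unpacking, because it is not the usual daily-returns version. It takes the mean and standard deviation of per-trade returns and scales by the square root of trades per year (`len(trades_df)/2` for a two-year sample). Here is a minimal plain-Python sketch of that same formula. The function name is my own, the risk-free rate is assumed to be zero, and the sample returns are made up.

```python
from math import sqrt
from statistics import mean, stdev

def annualized_trade_sharpe(trade_returns, years):
    """Per-trade Sharpe scaled by sqrt(trades per year); risk-free rate assumed 0."""
    if len(trade_returns) < 2:
        return float("nan")
    sd = stdev(trade_returns)  # sample std (ddof=1), the same default as pandas .std()
    if sd == 0:
        return float("nan")
    trades_per_year = len(trade_returns) / years
    return mean(trade_returns) / sd * sqrt(trades_per_year)

# Made-up per-trade returns, purely to show the mechanics.
example_returns = [0.02, -0.01, 0.03, -0.02]
print(round(annualized_trade_sharpe(example_returns, years=2), 3))
```

This scaling treats trades as independent and ignores time spent out of the market, so it is only loosely comparable to a Sharpe ratio computed from an hourly or daily equity curve. The walk-forward listing later in the article scales by `sqrt(len(trades_df))` instead, so its figures are not on quite the same footing as the ones here.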

Stage 4: Debugging and Optimization

Prompt for Claude:

The autocorrelation strategy is losing money (-7.54% return, Sharpe -0.23).

Analyze potential issues:

1. Is the autocorr threshold (-0.3) too strict?

2. Is 24-hour exit too long?

3. Are commissions (0.1%) eating all profits?

4. Is the strategy valid at all?

Suggest 3 modifications to test.

Claude’s Response:

Issue Analysis:

- Threshold -0.3 is likely too strict

- Only 47 trades in 2 years = very rare condition

- Missing profitable opportunities

- 24-hour exit may be too long

- Mean reversion typically happens within 6-12h

- Holding longer exposes to new noise

- Commissions impact:

- 0.1% * 2 (entry+exit) * 47 trades = ~9.4% total fees

- With -7.54% return, gross return ~ +1.9% (still bad)

- Strategy validity concern:

- Autocorrelation signal may be too weak

- Entering “opposite to 4h move” is arbitrary

Suggested Modifications:

Modification 1: Relax threshold

- Entry: autocorr < -0.2 (instead of -0.3)

- Exit: autocorr > -0.05 OR 12 hours (instead of 24h)

Modification 2: Add volume filter

- Only enter when volume > average (avoid low-liquidity periods)

Modification 3: Dynamic position sizing

- Scale position by strength of autocorr:

- autocorr -0.2 to -0.3: 50% capital

- autocorr < -0.3: 100% capital
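Modification 3 was not tested in this experiment. As a sketch of what the tiering might look like in code (the function name and the handling of the exact boundary values are my assumptions, since the suggestion does not spell them out):

```python
def position_fraction(autocorr):
    """Fraction of capital to deploy for a given rolling autocorrelation.

    Tiers follow Modification 3: below -0.3 -> 100%, between -0.3 and -0.2 -> 50%,
    otherwise no position. The boundary value -0.3 itself falls in the 50% tier here.
    """
    if autocorr != autocorr:  # NaN during the warm-up window
        return 0.0
    if autocorr < -0.3:
        return 1.0
    if autocorr < -0.2:
        return 0.5
    return 0.0

for value in (-0.35, -0.25, -0.10):
    print(value, position_fraction(value))
```

In the backtest loop, this fraction would multiply the `capital / current_price` term when a position is opened.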

Testing Modification 1:

Changed threshold to -0.2, exit to 12h. Running backtest.

Total Trades: 134

Win Rate: 51.49%

Final Capital: $10,872.34

Total Return: +8.72%

Sharpe Ratio: 0.47

Result: The strategy is profitable! +8.72% over 2 years, Sharpe 0.47.

Analysis:

What went well:

- Claude correctly identified the problem (threshold too strict)

- Suggested logical modifications

- Modification 1 worked

What went wrong:

- Claude cannot run the backtest and verify on its own

- I had to manually change the code and test
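The fee arithmetic in Claude’s issue analysis is easy to reproduce, and it is worth rerunning for the modified strategy. The sketch below uses the same simplification Claude did: it sums fees as a flat fraction of capital and ignores compounding. The function name is my own.

```python
def total_fee_drag(num_trades, fee_per_side=0.001):
    """Approximate cumulative fees as a fraction of capital (entry + exit per trade)."""
    return num_trades * 2 * fee_per_side

print(f"{total_fee_drag(47):.1%}")   # 9.4%
print(f"{total_fee_drag(134):.1%}")  # 26.8%
```

Relaxing the threshold nearly tripled the trade count, so under this approximation the fee bill grows from about 9.4% to about 26.8% of capital. The reported +8.72% is already net of commission, because the script deducts it on every exit. Put differently, each trade has to earn more than 0.2% gross just to break even, and slippage is still not modeled at all.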

Question: Can the LLM automate iterative testing?

Stage 5: Automating Optimization

Prompt for ChatGPT:

Write Python code that automatically tests 100 combinations of:

- autocorr_entry_threshold: from -0.15 to -0.4 (step 0.05)

- autocorr_exit_threshold: from -0.05 to -0.15 (step 0.02)

- exit_hours: from 6 to 24 (step 3)

For each combination:

1. Run backtest

2. Record Sharpe ratio, total return, max drawdown

3. Save results to CSV

Then print top 10 combinations by Sharpe ratio.

ChatGPT’s Response:

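(ChatGPT generated 150 lines of code for the grid search; the core is shown here. The grid it builds has 6 × 6 × 7 = 252 combinations, not the 100 mentioned in the prompt.)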

```python
import itertools
import numpy as np
import pandas as pd

# Parameter grid
param_grid = {
    'entry_threshold': np.arange(-0.4, -0.14, 0.05),
    'exit_threshold': np.arange(-0.15, -0.04, 0.02),
    'exit_hours': range(6, 27, 3)
}

# Generate all combinations
params = list(itertools.product(
    param_grid['entry_threshold'],
    param_grid['exit_threshold'],
    param_grid['exit_hours']
))

results = []

for entry_thresh, exit_thresh, exit_h in params:
    # Run backtest (simplified version)
    capital, trades = run_backtest(data, entry_thresh, exit_thresh, exit_h)

    if len(trades) > 0:
        trades_df = pd.DataFrame(trades)
        sharpe = trades_df['return'].mean() / trades_df['return'].std() * np.sqrt(len(trades_df)/2)
        # Note: run_backtest, calculate_max_drawdown and equity_curve belong to
        # the full generated listing and are not defined in this excerpt.
        max_dd = calculate_max_drawdown(equity_curve)

        results.append({
            'entry_threshold': entry_thresh,
            'exit_threshold': exit_thresh,
            'exit_hours': exit_h,
            'total_return': (capital / 10000 - 1),
            'sharpe': sharpe,
            'max_drawdown': max_dd,
            'num_trades': len(trades)
        })

# Save and sort
results_df = pd.DataFrame(results)
results_df.to_csv('optimization_results.csv', index=False)

print("Top 10 by Sharpe:")
print(results_df.sort_values('sharpe', ascending=False).head(10))
```

Running it:

$ python optimize.py

Top 10 by Sharpe:

entry_threshold exit_threshold exit_hours total_return sharpe max_drawdown num_trades

0 -0.25 -0.09 12 0.1342 0.78 -0.082 156

1 -0.20 -0.07 9 0.1189 0.71 -0.091 203

2 -0.30 -0.11 15 0.1056 0.67 -0.073 89

...

Best combination:

- Entry: autocorr < -0.25

- Exit: autocorr > -0.09 OR 12 hours

- Return: +13.42%

- Sharpe: 0.78

Analysis:

ChatGPT wrote the grid search code in 2 minutes. Optimal parameters found.

But there’s an overfitting risk — optimization on the same data used for testing.

Problem: The LLM didn’t warn about overfitting. You have to add a walk-forward test yourself.
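The grid-search excerpt calls `calculate_max_drawdown` without showing it. One common way to write such a helper is sketched below. This is my own plain-Python version, not ChatGPT’s output, and it assumes a non-empty equity curve with positive values. It returns a negative fraction, matching the sign convention in the results table.

```python
def calculate_max_drawdown(equity_curve):
    """Largest peak-to-trough decline as a negative fraction (e.g. -0.10 for -10%)."""
    peak = equity_curve[0]
    max_dd = 0.0
    for value in equity_curve:
        peak = max(peak, value)          # highest equity seen so far
        drawdown = value / peak - 1.0    # 0 at a new high, negative below it
        max_dd = min(max_dd, drawdown)
    return max_dd

# Illustrative equity curve: peak of 10,500 followed by a trough of 9,450.
equity = [10_000, 10_500, 9_800, 10_200, 9_450, 11_000]
print(round(calculate_max_drawdown(equity), 4))  # -0.1
```

Sorting the grid by Sharpe alone hides this number, so it is worth reading the `max_drawdown` column next to every candidate before picking a “best” row.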

Stage 6: Validation (Walk-Forward Test)

Prompt for Claude:

The optimization found best params: entry=-0.25, exit=-0.09, hours=12.

But this is in-sample optimization (overfitting risk).

Implement walk-forward testing:

1. Split 2 years into 4 periods (6 months each)

2. For each period:

- Optimize on previous period (if exists)

- Test on current period

3. Compare in-sample vs out-of-sample Sharpe

Code must be complete.

Claude’s Response:

```python
import pandas as pd
import numpy as np

# Split data into 6-month periods
periods = []
start_date = data.index[0]

for i in range(4):
    end_date = start_date + pd.DateOffset(months=6)
    period_data = data[(data.index >= start_date) & (data.index < end_date)]
    periods.append(period_data)
    start_date = end_date

# Walk-forward test
in_sample_sharpes = []
out_of_sample_sharpes = []

for i in range(1, 4):
    # Optimize on previous period
    train_data = periods[i-1]
    best_params = optimize_on_data(train_data)  # returns (entry, exit, hours)

    # Test on current period
    test_data = periods[i]
    capital, trades = run_backtest(test_data, *best_params)
    trades_df = pd.DataFrame(trades)
    oos_sharpe = trades_df['return'].mean() / trades_df['return'].std() * np.sqrt(len(trades_df))

    # Compare
    train_sharpe = get_sharpe_on_data(train_data, *best_params)

    print(f"Period {i}:")
    print(f" In-sample Sharpe: {train_sharpe:.2f}")
    print(f" Out-of-sample Sharpe: {oos_sharpe:.2f}")
    print(f" Degradation: {train_sharpe - oos_sharpe:.2f}\n")

    in_sample_sharpes.append(train_sharpe)
    out_of_sample_sharpes.append(oos_sharpe)

print(f"Average degradation: {np.mean(in_sample_sharpes) - np.mean(out_of_sample_sharpes):.2f}")
```

Running it:

Period 1:

In-sample Sharpe: 0.82

Out-of-sample Sharpe: 0.34

Degradation: 0.48

Period 2:

In-sample Sharpe: 0.76

Out-of-sample Sharpe: 0.41

Degradation: 0.35

Period 3:

In-sample Sharpe: 0.71

Out-of-sample Sharpe: 0.29

Degradation: 0.42

Average degradation: 0.42

Conclusion: Severe overfitting. Out-of-sample Sharpe averages 0.35 against 0.76 in-sample, less than half.
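The averages behind that conclusion follow directly from the three periods printed above. A quick check, using only the numbers from the run:

```python
from statistics import mean

# Sharpe ratios copied from the walk-forward output above.
in_sample = [0.82, 0.76, 0.71]
out_of_sample = [0.34, 0.41, 0.29]

avg_is = mean(in_sample)
avg_oos = mean(out_of_sample)

print(f"avg in-sample:     {avg_is:.2f}")             # 0.76
print(f"avg out-of-sample: {avg_oos:.2f}")            # 0.35
print(f"degradation:       {avg_is - avg_oos:.2f}")   # 0.42
print(f"ratio:             {avg_is / avg_oos:.1f}x")  # 2.2x
```

Three test periods is a very small sample, so the ratio is best read as an order of magnitude rather than a precise estimate.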

Analysis:

What went well:

- Claude correctly implemented the walk-forward test

- Identified overfitting

What went wrong:

- Didn’t propose a solution (how to reduce overfitting)

Question: Can the LLM suggest methods to combat overfitting?

Stage 7: Fighting Overfitting

Prompt for ChatGPT:

Walk-forward test shows strong overfitting (Sharpe degrades from 0.78 to 0.35).

Suggest 3 methods to reduce overfitting in this autocorrelation strategy.

For each method, explain WHY it helps and provide code modifications.

ChatGPT’s Response:

Method 1: Reduce parameter space

Why: Fewer parameters = less chance to fit noise

Modification:

- Instead of optimizing 3 parameters (entry, exit, hours)

- Fix exit_hours = 12 (based on Claude’s research)

- Only optimize entry_threshold

- This reduces search space from 100 to 10 combinations

Method 2: Use ensemble of parameter sets

Why: Average of multiple parameter sets is more robust than single “best”

Modification:

```python
# Instead of using single best params:
top_5_params = optimization_results.head(5)

# Trade with average signal:
signals = []
for params in top_5_params:
    signal = generate_signal(data, params)
    signals.append(signal)

final_signal = np.sign(np.mean(signals, axis=0))  # Majority vote
```

Method 3: Add regularization via minimum trade count

Why: Avoid parameter sets that trade too frequently (likely overfit)

Modification:

```python
# Filter optimization results:
valid_results = results_df[
    (results_df['num_trades'] >= 50) &
    (results_df['num_trades'] <= 200)
]
```

Testing Method 2 (Ensemble):

Taking top-5 parameter sets, averaging signals.

Ensemble Results:

Trades: 127

Return: +9.84%

Sharpe: 0.52

Out-of-sample Sharpe: 0.48

Degradation: 0.04 # Much less!

Result: In this test the gap between in-sample and out-of-sample Sharpe shrank to 0.04 (from 0.42), so most of the measured overfitting is gone.
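To make the voting step concrete, here is a dependency-free sketch of the sign-of-the-mean rule from Method 2, applied to three made-up signal series. The function names and the sample signals are mine. The real experiment used the top-5 parameter sets from the grid search.

```python
def sign(x):
    """Return -1, 0 or +1 as an int."""
    return (x > 0) - (x < 0)

def ensemble_signal(signals_per_model):
    """Combine per-bar signals (-1, 0, +1) from several parameter sets.

    Equivalent to np.sign(np.mean(signals, axis=0)): the sign of the net vote.
    """
    n_bars = len(signals_per_model[0])
    combined = []
    for t in range(n_bars):
        net_vote = sum(model[t] for model in signals_per_model)
        combined.append(sign(net_vote))
    return combined

# Three hypothetical parameter sets, five bars each.
signals = [
    [1, 0, -1, 0, 1],
    [1, 0, -1, 1, 0],
    [1, 0, 0, -1, -1],
]
print(ensemble_signal(signals))  # [1, 0, -1, 0, 0]
```

Note that this is a net vote rather than a strict majority: a single parameter set signalling while the others stay flat is enough to trigger a trade. Requiring, say, at least three of five sets to agree would be a stricter variant.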

Analysis:

What went well:

- ChatGPT suggested 3 practical methods

- The ensemble approach worked

- Explained WHY each method helps

This is senior quant analyst level work.

Where the LLM Succeeded and Failed: Summary Table

| Task | LLM Result | Rating | Comment |

|---|---|---|---|

| Idea generation | 5 strategies in 30 sec | 5/5 | All logical, testable |

| Research (finding papers) | Found 1 real paper, 1 fake | 3/5 | Needs fact-checking |

| Writing code | Works on first run | 5/5 | Clean code |

| Backtesting | Correct implementation | 5/5 | Accounted for commissions, exit rules |

| Debugging | Correctly identified the problem | 4/5 | But can’t test on its own |

| Optimization | Grid search in 2 minutes | 5/5 | But didn’t warn about overfitting |

| Walk-forward test | Correct implementation | 4/5 | But didn’t suggest a solution |

| Fighting overfitting | 3 methods, 1 tested and worked | 5/5 | Senior-level advice |

| Final return | +9.84%, Sharpe 0.52 | 3/5 | Works, but not impressive |

What the LLM does excellently:

- Code generation — faster and more accurate than a junior dev

- Standard patterns — backtesting, grid search, walk-forward

- Explaining concepts — why ensemble helps, what overfitting is

- Idea generation — dozens of ideas in minutes

What the LLM does poorly:

- Fact-checking — fabricates numbers and references

- Critical thinking — doesn’t warn about overfitting proactively

- Intuition — can’t say “this idea definitely won’t work”

- Iterative testing — can’t run code and verify on its own

- Context understanding — doesn’t account for market regime, macro

Comparison: LLM vs Quant Analyst

What the LLM can already do:

| Task | Quant (8 hours) | LLM (30 minutes) | LLM Quality |

|---|---|---|---|

| Write a backtest | Yes | Yes | 95% |

| Parameter optimization | Yes | Yes | 100% |

| Generate 10 ideas | Yes | Yes | 80% |

| Explain a concept | Yes | Yes | 90% |

Conclusion: For routine tasks, the LLM is faster and cheaper.

What the LLM cannot do (yet):

| Task | Quant | LLM | Why the LLM can’t |

|---|---|---|---|

| Assess whether an idea is worth researching | Yes | No | No intuition |

| Predict market regime change | Yes | No | No macro understanding |

| Find an edge in data | Yes | No | Doesn’t see patterns it wasn’t looking for |

| Decide on deployment | Yes | No | No accountability |

| Explain a loss to management | Yes | No | No communication skills |

Real Use Cases of LLMs by Quants

At the Quant Strats 2025 conference, professionals shared how they use LLMs:

Case 1: Alpha Factor Generation (Goldman Sachs)

Task: Find new alpha factors for equity strategies.

Old process:

- Quant manually comes up with 10-20 factors per month

- Tests each one

- 90% don’t work

With LLM (Alpha-GPT 2.0):

- LLM generates 100+ alpha factors per hour

- Automatically tests each one

- Quant analyzes only the top 10

Result: 5x faster.

Case 2: Sentiment Analysis (JP Morgan)

Task: Analyze earnings calls, news, Twitter for sentiment.

Old process:

- NLP models (BERT, FinBERT)

- Require training on financial data

- Hard to adapt to new sources

With LLM:

```python
prompt = f"""
Analyze this earnings call transcript: {transcript}

Rate on scale 1-10:
1. Management confidence
2. Revenue outlook
3. Cost concerns
4. Competitive position

Provide JSON output.
"""

sentiment = llm.generate(prompt)
```

Result: Faster, more flexible, no training required.
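Because the model’s reply is free text that merely should be JSON, it pays to validate it before any number reaches a trading signal. Here is a minimal sketch using only the standard library. The key names are my own guess at how the four requested ratings might be labelled, so in practice the prompt should pin them down explicitly.

```python
import json

# Hypothetical key names for the four ratings requested in the prompt.
EXPECTED_KEYS = {
    "management_confidence",
    "revenue_outlook",
    "cost_concerns",
    "competitive_position",
}

def parse_sentiment(raw_reply):
    """Parse the LLM reply and reject anything outside the requested 1-10 scale."""
    scores = json.loads(raw_reply)  # raises ValueError (JSONDecodeError) on bad JSON
    if not isinstance(scores, dict):
        raise ValueError("expected a JSON object")
    missing = EXPECTED_KEYS - scores.keys()
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")
    for key in EXPECTED_KEYS:
        value = scores[key]
        is_number = isinstance(value, (int, float)) and not isinstance(value, bool)
        if not is_number or not 1 <= value <= 10:
            raise ValueError(f"{key} out of range: {value!r}")
    return {key: scores[key] for key in sorted(EXPECTED_KEYS)}

reply = ('{"management_confidence": 7, "revenue_outlook": 6, '
         '"cost_concerns": 4, "competitive_position": 8}')
print(parse_sentiment(reply))
```

A reply that fails validation can be retried or dropped, which is safer than letting a malformed score flow silently into a model.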

Case 3: Code Review and Debugging (Citadel)

Task: Review trading strategy code from junior quants.

Old process:

- Senior quant spends 2-3 hours on review

- Finds bugs, suggests improvements

With LLM:

```python
prompt = f"""
You are a senior quant at a hedge fund.

Review this trading strategy code: {code}

Check for:
1. Look-ahead bias
2. Incorrect position sizing
3. Missing commission handling
4. Off-by-one errors in indexing
5. Performance bottlenecks

Provide detailed feedback.
"""

review = llm.generate(prompt)
```

Result: The LLM finds 70-80% of typical errors in seconds.

Case 4: Documentation Automation (Two Sigma)

Task: Document every strategy (required for compliance).

Old process:

- Quant writes a 10-page report manually

- 4-6 hours of work

With LLM:

```python
prompt = f"""
Generate a strategy documentation based on this code: {code}

Include:
1. Executive summary
2. Mathematical formulation
3. Risk analysis
4. Backtest results interpretation
5. Assumptions and limitations

Format: PDF-ready markdown.
"""

doc = llm.generate(prompt)
```

Result: Documentation in 30 minutes instead of 6 hours.

Forecast: What Will Happen to Quant Analysts

Scenario 1: Augmentation (most likely)

LLMs won’t replace quants — they’ll augment them.

What it looks like:

- Junior quants: Do the same work but 3-5x faster (thanks to LLMs)

- Senior quants: Focus on strategic thinking, LLMs handle the routine

- New role: “Prompt Engineer” — specialist in effective LLM usage

Analogy: Calculators didn’t replace mathematicians, but they changed how they work.

Scenario 2: Democratization (moderate probability)

LLMs will make quant analysis accessible to non-programmers.

Who gains access:

- Portfolio managers (can test ideas themselves)

- Retail traders (via no-code platforms with LLMs)

- Analysts without programming skills

Consequences:

- Demand for junior quants will drop (their tasks get automated)

- Demand for senior quants will rise (needed to validate LLM-generated strategies)

Scenario 3: Full replacement (low probability)

LLMs learn to do everything quants do.

Problems:

- Regulators won’t approve (need human accountability)

- LLMs hallucinate (critical in finance)

- No “understanding” of context (macro, geopolitics)

Timeline: If it happens at all, not before 2035-2040.

Practical Recommendations for Quants

1. Use LLMs as an assistant

Tasks you can delegate to the LLM:

- Code generation (boilerplate, backtesting loops)

- Initial research (finding papers, summaries)

- Idea generation (brainstorming alpha factors)

- Documentation

- Code review (first pass)

What to keep for yourself:

- Critical thinking

- Final decision

- Accountability for results

2. Learn prompt engineering

A good prompt can increase LLM quality by 3-5x.

Bad prompt:

Create a trading strategy for BTC

Good prompt:

You are a quantitative analyst with 15 years of experience at Renaissance Technologies.

Create a mean-reversion trading strategy for BTC/USDT with the following requirements:

- Timeframe: 1-hour candles

- Holding period: 6-24 hours

- No technical indicators (use statistical properties)

- Include entry/exit rules with specific thresholds

- Provide expected Sharpe ratio and reasoning

Output format:

1. Strategy name

2. Hypothesis (why it should work)

3. Mathematical formulation

4. Entry rules

5. Exit rules

6. Risk management

7. Expected performance

Difference: Generic idea vs detailed specification.

3. Verify everything the LLM generates

LLMs hallucinate especially often in finance.

What to verify:

- Numbers and statistics (ask for the source)

- Academic references (check if they exist)

- Code (run unit tests)

- Logic (look-ahead bias, off-by-one errors)

Rule: Trust, but verify.
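Look-ahead bias in particular can be tested mechanically: if changing prices after bar t changes the signal at bar t, the signal is peeking into the future. The sketch below shows the idea on two toy signal functions, one clean and one deliberately broken. All names are illustrative and not taken from the strategy code above.

```python
def fade_signal(prices, t, lookback=4):
    """Toy signal: fade the move over the last `lookback` bars, using data up to t only."""
    if t < lookback:
        return 0
    move = prices[t] - prices[t - lookback]
    return -1 if move > 0 else (1 if move < 0 else 0)

def leaky_signal(prices, t, lookback=4):
    """Deliberately broken variant: reads the price one bar ahead."""
    if t < lookback or t + 1 >= len(prices):
        return 0
    move = prices[t + 1] - prices[t - lookback]
    return -1 if move > 0 else (1 if move < 0 else 0)

def has_lookahead(signal_fn, prices, t):
    """True if altering prices after bar t changes the signal at bar t."""
    baseline = signal_fn(prices, t)
    for shock in (0.5, 2.0):
        altered = prices[: t + 1] + [p * shock for p in prices[t + 1:]]
        if signal_fn(altered, t) != baseline:
            return True
    return False

prices = [100, 101, 103, 102, 104, 105, 103, 106]
print(has_lookahead(fade_signal, prices, t=5))   # False
print(has_lookahead(leaky_signal, prices, t=5))  # True
```

A check like this cannot prove a backtest is clean, but it catches the most common indexing mistakes cheaply, and it is exactly the kind of unit test worth asking the LLM to write alongside the strategy.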

4. Combine LLMs with classical methods

The best results come from a hybrid approach:

```python
class HybridQuant:
    def __init__(self):
        self.llm = LLM()

    def generate_ideas(self):
        # LLM generates 100 ideas
        ideas = self.llm.brainstorm(n=100)
        return ideas

    def filter_ideas(self, ideas):
        # Quant selects top 10 by intuition
        return self.manual_review(ideas, n=10)

    def implement(self, idea):
        # LLM writes the code
        code = self.llm.generate_code(idea)
        # Quant reviews
        validated_code = self.review_code(code)
        return validated_code

    def backtest(self, code):
        # Automatic backtest
        results = run_backtest(code)
        # Quant interprets
        analysis = self.interpret_results(results)
        return analysis
```

Conclusions

Can an LLM replace a quant analyst?

Short answer: No. But it can make one 5x more productive.

What the LLM already does better than a quant:

- Code generation (faster, fewer bugs)

- Brainstorming ideas (100 ideas vs 10)

- Routine tasks (grid search, documentation)

What the LLM cannot do:

- Critical thinking (assessing whether an idea is worth the time)

- Context understanding (macro, market regime)

- Accountability for decisions

- Communication with management

Forecast for 2026-2030:

- Junior quants: Demand will drop (their tasks get automated by LLMs)

- Senior quants: Demand will rise (needed to validate LLMs and for strategic thinking)

- New role: “AI-Augmented Quant” — specialist in effective LLM collaboration

For quants this means:

- Learn prompt engineering

- Use LLMs for routine work

- Focus on what LLMs can’t do (intuition, strategy)

- Verify everything the LLM generates

Upcoming articles:

- Where AI assistance ends and blowing up your account begins: “black box” risks

- Experiment: LLM + classical algorithm — can we improve a strategy with AI filters

LLMs won’t replace quants. But quants who don’t use LLMs will be replaced by those who do.

Useful links:

LLMs in quantitative finance:

- Quant Strats 2025: Integrating LLMs

- Automate Strategy Finding with LLM

- Alpha-GPT 2.0 Framework

- LLM for Trading Data Analysis

Practical guides:

- Prompt Engineering for Traders

- Claude Trading Strategy

- ChatGPT and Claude: Data to Backtesting

- LLM Agent Trader in Python
