EU AI Act: What Awaits the Financial Sector in August 2026

The Deadline Is Approaching

August 2, 2026 is the date the European financial sector has circled in red on its calendar. On this day, the core provisions of the EU AI Act (the EU Artificial Intelligence Regulation) governing high-risk systems become applicable; the Act itself entered into force on August 1, 2024. Several of the most common AI use cases in retail finance and insurance fall squarely into this category.

Which Financial AI Systems Are Considered High-Risk

According to Annex III of the EU AI Act, high-risk AI systems include those used for:

- Creditworthiness assessment of individuals

- Credit scoring: establishing an individual's credit score, which typically drives loan terms

- Pricing of life and health insurance products for individuals

- Risk assessment in life and health insurance

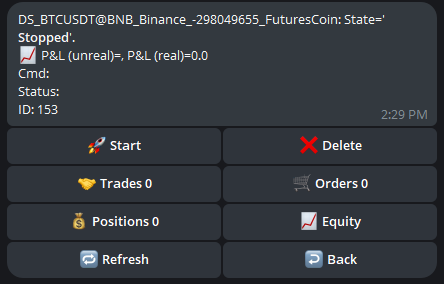

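Two further categories are often mentioned in this context, but neither is an Annex III use case in its own right:

- Automated trading: algorithmic trading systems, including HFT, are not listed in Annex III and remain subject to existing financial regulation such as MiFID II

- Anti-fraud systems: AI used to detect financial fraud is explicitly excluded from the creditworthiness category in Annex III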

Key Requirements

1. Risk Management System (Article 9)

A continuous risk assessment and management system must be implemented, including:

- Identification and analysis of known and foreseeable risks

- Risk assessment for intended use and foreseeable misuse

- Risk mitigation measures

- System testing

2. Data Quality (Article 10)

Training, validation, and test datasets must:

- Be relevant, representative, and as error-free as possible

- Account for the geographic, contextual, behavioral, or functional setting in which the system will be used

- Be examined for possible biases, with measures to detect and mitigate them
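One simple way to look for bias in a labelled dataset is to compare outcome rates across groups. The sketch below is only an illustration: the Act does not prescribe a metric, and the field names `group` and `approved`, the function names, and the sample rows are all hypothetical.

```python
from collections import defaultdict


def approval_rates(records, group_key="group", label_key="approved"):
    """Return the share of positive labels per group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        group = row[group_key]
        totals[group] += 1
        if row[label_key]:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    if not rates:
        raise ValueError("no groups to compare")
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so rates are equal
    return min(rates.values()) / highest


# Hypothetical training sample: 3 of 4 approved in group A, 1 of 4 in group B.
sample = (
    [{"group": "A", "approved": True}] * 3
    + [{"group": "A", "approved": False}]
    + [{"group": "B", "approved": True}]
    + [{"group": "B", "approved": False}] * 3
)
sample_rates = approval_rates(sample)
sample_ratio = rate_ratio(sample_rates)
```

A ratio well below 1.0 does not prove unlawful bias, but it flags a dataset that deserves a closer look before training.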

3. Technical Documentation (Article 11)

Each system must have detailed documentation covering:

- System description and intended purpose

- Architecture, algorithms, and training process

- Performance metrics and limitations

- Cybersecurity measures

4. Logging (Article 12)

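Systems must be able to automatically record events (logs) over their lifetime. For a financial decision system, this typically means logging: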

- Each instance of system use

- Decisions made and their rationale

- Input data and outputs
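The logging items above can be sketched as one structured record per decision. This is a minimal illustration, not a format defined by the Act: the field names, the `ai_decision_log` logger name, and the reason codes are assumptions made for the example.

```python
import json
import logging
import uuid
from datetime import datetime, timezone

logger = logging.getLogger("ai_decision_log")  # hypothetical logger name


def build_decision_record(model_version, inputs, output, reason_codes):
    """Assemble one log entry for a single use of the system."""
    return {
        "event_id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "inputs": inputs,              # input data
        "output": output,              # decision made
        "reason_codes": reason_codes,  # rationale, in whatever form the model exposes
    }


def log_decision(record):
    """Emit the record as a single JSON line and return that line."""
    line = json.dumps(record, sort_keys=True)
    logger.info(line)
    return line


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    log_decision(
        build_decision_record(
            model_version="credit-scoring-1.4.2",
            inputs={"income_eur": 42000, "loan_eur": 10000},
            output={"approved": False, "score": 0.31},
            reason_codes=["debt_to_income_high"],
        )
    )
```

One JSON object per line keeps the log machine-readable, which matters when the records have to be retrieved for an audit later.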

5. Transparency (Article 13)

Deployers must be able to interpret the system's output and use it appropriately, which requires:

- Clear usage instructions

- Information about capabilities and limitations

- Accuracy levels and known errors

6. Human Oversight (Article 14)

The system must be designed so that the people overseeing it can:

- Properly understand its capabilities and limitations

- Monitor its operation

- Override its output or shut the system down
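In code, human oversight often comes down to three hooks: a way to stop the system, a way to override its output, and a rule for when a case goes to a person. The sketch below shows one possible shape; the class, the review band of 0.4 to 0.6, and the convention that `None` means "send to manual review" are assumptions for illustration, not requirements taken from the Act.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class Decision:
    approved: bool
    source: str  # "model" or "human"


class OverseenScorer:
    """Wraps a scoring function with stop, override, and review hooks."""

    def __init__(self, model_fn: Callable[[dict], float],
                 review_band: Tuple[float, float] = (0.4, 0.6)):
        self.model_fn = model_fn
        self.review_band = review_band
        self.enabled = True

    def stop(self) -> None:
        """Switch the model off; every case then goes to manual review."""
        self.enabled = False

    def decide(self, applicant: dict,
               human_decision: Optional[bool] = None) -> Optional[Decision]:
        # A human decision always wins over the model.
        if human_decision is not None:
            return Decision(human_decision, "human")
        if not self.enabled:
            return None  # model stopped: manual review required
        score = self.model_fn(applicant)
        low, high = self.review_band
        if low <= score <= high:
            return None  # borderline score: manual review required
        return Decision(score > high, "model")
```

Recording the `source` of each decision also makes it possible to report later how often staff overrode the model.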

Penalties

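| Violation | Maximum fine |
|---|---|
| Use of prohibited AI systems | Up to €35 million or 7% of global annual turnover, whichever is higher |
| Non-compliance with high-risk system requirements | Up to €15 million or 3% of global annual turnover, whichever is higher |
| Supplying incorrect or misleading information to authorities | Up to €7.5 million or 1% of global annual turnover, whichever is higher |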

For comparison: maximum GDPR fines are €20 million or 4% of turnover.
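For undertakings, each AI Act cap is the higher of the fixed amount and the turnover percentage, so the ceiling grows with company size. A minimal sketch of that arithmetic for the high-risk tier follows; the function name and the two example turnovers are illustrative, and the sketch does not model the separate treatment of SMEs, for which the lower of the two values applies.

```python
def max_fine_eur(fixed_cap_eur: int, turnover_bps: int, annual_turnover_eur: int) -> int:
    """Return the higher of the fixed cap and the turnover-based cap.

    turnover_bps is the percentage in basis points (3% = 300), which keeps
    the calculation in whole euros without floating-point rounding.
    """
    turnover_cap_eur = annual_turnover_eur * turnover_bps // 10_000
    return max(fixed_cap_eur, turnover_cap_eur)


# High-risk tier: up to EUR 15 million or 3% of global annual turnover.
HIGH_RISK_FIXED_CAP_EUR = 15_000_000
HIGH_RISK_TURNOVER_BPS = 300

# Hypothetical bank with EUR 2 billion turnover: 3% is EUR 60 million.
large_bank_cap = max_fine_eur(
    HIGH_RISK_FIXED_CAP_EUR, HIGH_RISK_TURNOVER_BPS, 2_000_000_000
)  # 60_000_000

# Hypothetical fintech with EUR 100 million turnover: 3% is only EUR 3 million,
# so the fixed EUR 15 million cap is the higher value.
fintech_cap = max_fine_eur(
    HIGH_RISK_FIXED_CAP_EUR, HIGH_RISK_TURNOVER_BPS, 100_000_000
)  # 15_000_000
```

These are ceilings, not expected fines; the actual amount is set by the authority case by case.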

Who Needs to Prepare

Banks and Fintech

Any bank using AI for credit scoring, AML checks, or other automated decision-making should classify those systems under the Act and bring the high-risk ones, credit scoring above all, into compliance.

Insurance Companies

AI models for pricing and underwriting require full documentation and auditing.

Investment Funds

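Algorithmic trading systems using ML models fall under the requirements of Articles 9-14 only if they are classified as high-risk. Trading is not itself listed in Annex III, so each system needs a case-by-case assessment.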

Brokers

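If a broker offers AI recommendations to clients, that system needs to be classified under the Act. It is high-risk only if it falls within an Annex III use case, such as assessing a client's creditworthiness.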

Practical Steps

- Conduct an inventory of all AI systems in your organization

- Classify each one by risk level according to the EU AI Act

- Appoint compliance officers

- Begin documentation — this is the most labor-intensive part

- Implement monitoring and logging systems

- Audit the data used for training
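The first two steps, inventory and classification, can start as a simple first-pass triage. The sketch below is a starting point under stated assumptions, not a legal classification: the use-case tags, the `AISystem` fields, and the example systems are hypothetical, and anything the triage does not flag still needs review against the full text of the Act.

```python
from dataclasses import dataclass

# Hypothetical tags for the finance-related Annex III use cases.
ANNEX_III_FINANCE_TAGS = {
    "creditworthiness_assessment",
    "credit_scoring",
    "life_health_insurance_pricing",
}


@dataclass(frozen=True)
class AISystem:
    name: str
    owner: str
    use_case: str  # one tag per system, assigned during the inventory


def triage(system: AISystem) -> str:
    """Flag obvious high-risk candidates; everything else goes to review."""
    if system.use_case in ANNEX_III_FINANCE_TAGS:
        return "high-risk candidate"
    return "needs classification review"


def triage_inventory(systems):
    """Map each system name to its first-pass triage result."""
    return {system.name: triage(system) for system in systems}


inventory = [
    AISystem("retail-loan-scorer", "credit-risk", "credit_scoring"),
    AISystem("campaign-copy-writer", "marketing", "marketing_copy_generation"),
]
triage_result = triage_inventory(inventory)
```

Keeping an `owner` on every entry makes the later steps, documentation and monitoring, easier to assign.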

Less than five months remain until the deadline. If you haven't started yet, now is the time.
