Open-Source LLMs: Why Open Models Are Winning

Openness Wins

Back in 2023, it seemed like the future of AI belonged to closed models: OpenAI, Anthropic, and Google were pouring billions into proprietary development. But by 2026, the picture has changed dramatically – open models have not only caught up but in a number of tasks have surpassed their closed counterparts.

Key Players

DeepSeek (China)

DeepSeek V3 and R1 sent shockwaves through the industry:

- Quality comparable to GPT-5 at 10x lower training cost

- Fully open weights (Apache 2.0)

- Innovative MoE (Mixture of Experts) architecture

- API available for free for researchers

Meta Llama 4 (US)

Meta continues its openness strategy:

- Llama 4 Scout – 109B parameters, best in class

- Llama 4 Maverick – 400B+ parameters, GPT-5 competitor

- License allows commercial use

- Huge community and fine-tune model ecosystem

Qwen 3 (Alibaba, China)

Alibaba Cloud is actively developing the Qwen family:

- Excellent Chinese and other Asian language support

- Models from 0.5B to 72B parameters

- Multimodal versions (text + images + audio)

- Apache 2.0 license

Mistral Large 3 (France)

European leader Mistral AI:

- Mistral Large 3 – GPT-4o quality competitor

- Focus on European languages and EU AI Act compliance

- License with commercial use

- Efficient architecture for deployment on consumer hardware

Why Open Models Are Winning

1. Algorithmic Efficiency Matters More Than Data

DeepSeek proved that smart algorithms can compensate for less compute. Their model was trained for $5.6 million – tens of times cheaper than GPT-5.

2. Community Accelerates Development

An open model benefits from contributions by thousands of researchers and developers:

- Fine-tuning for specific tasks

- Optimization for different hardware

- Discovery and fixing of issues

- Creation of tools and libraries

3. Control and Security

Organizations prefer open models because they can:

- Run them on their own servers – data never leaves the perimeter

- Audit the model – know how it makes decisions

- Customize – adapt to their needs

- Avoid dependence on a single provider’s pricing

4. Regulatory Pressure

The EU AI Act and other regulatory frameworks require transparency in AI systems. Compliance is easier with an open model.

Benchmarks: Open vs Closed

| Benchmark | Best Open | Best Closed | Gap |

|---|---|---|---|

| MMLU | DeepSeek V3 (89.5%) | Claude Opus 4.6 (91.2%) | 1.7% |

| HumanEval | Llama 4 Maverick (92.1%) | Claude Sonnet 4.6 (96.2%) | 4.1% |

| MATH-500 | DeepSeek R1 (95.2%) | o3 (97.8%) | 2.6% |

| MT-Bench | Qwen 3 72B (9.1) | GPT-5 (9.4) | 0.3 |

The gap is narrowing every quarter. By the end of 2026, open models are projected to fully close the gap with closed ones.

Practical Recommendations

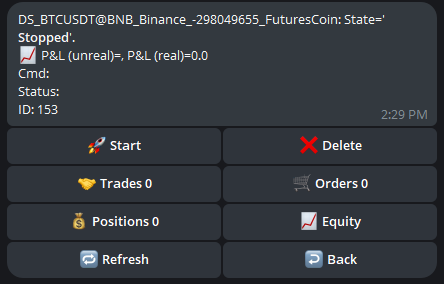

For algo traders and trading system developers:

- Start with open models – DeepSeek V3 and Llama 4 are free

- Use fine-tuning – adapt the model to the financial domain

- Local inference – vLLM, llama.cpp, Ollama let you run models locally

- Combine – use open models for bulk tasks, closed ones for mission-critical work

The future of AI is open. And that is good news for everyone.

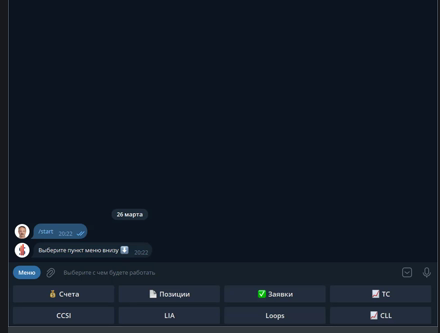

Discussion

Join the discussion in our Telegram chat!