Where AI Help Ends and Deposit Self-Destruction Begins: Black Box Risks

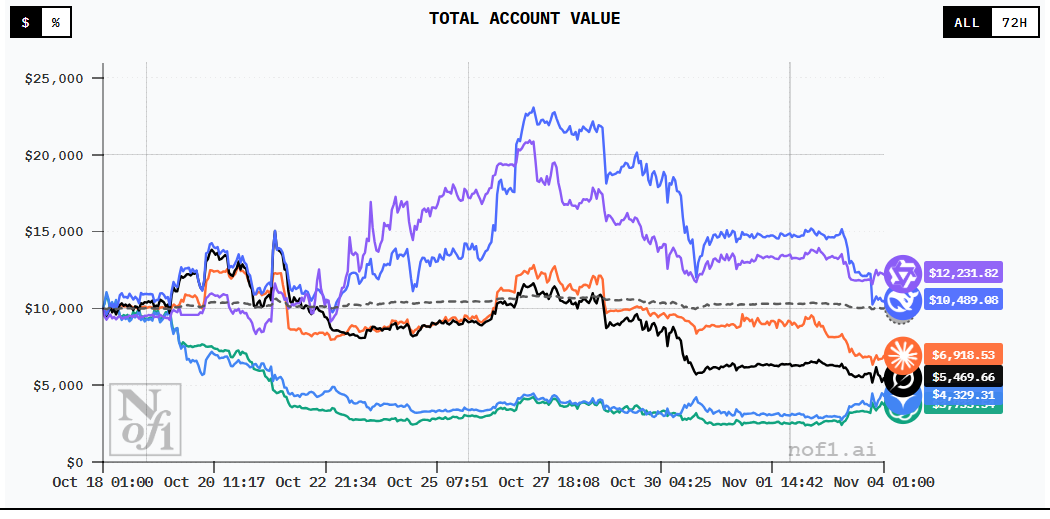

A week ago I showed how an LLM can help a quant. We created a strategy with +9.84%, Sharpe 0.52. Everything works.

But there’s a dark side. AI traders are losing millions. Not because the models are bad. But because nobody understands why they do what they do.

In 2023, a major hedge fund lost $50 million in a single day when their black box AI began making “unexplained trades” during volatility. The cause has never been found.

Between 2019 and 2025, the CFTC documented dozens of cases where “AI bots” promised “above-average returns,” but instead clients lost $1.7 billion (30,000 BTC).

Today we’ll look at: where exactly AI assistance turns into catastrophe, what risks black box trading carries, and why 85% of traders don’t trust AI.

What Is a “Black Box” in AI Trading

Black box AI is a system that makes decisions but doesn’t explain why.

Classic algorithm example (white box):

def should_buy(price, sma_50, sma_200):

if sma_50 > sma_200 and price < sma_50 * 0.98:

return True # Golden cross + pullback

return False

Clear:

- If the short-term MA > long-term (uptrend)

- And price pulled back 2% below the short-term MA (entry point)

- Buy

You can explain it to a client, a regulator, or yourself.

Black box AI example:

model = NeuralNetwork(layers=[128, 64, 32, 1])

model.train(historical_data)

def should_buy(market_data):

prediction = model.predict(market_data)

return prediction > 0.5 # Buy if model says "yes"

Unclear:

- Why did the model say “yes”?

- Which features did it use?

- What happens if the market changes?

The problem: A neural network with millions of parameters is a black box. You see the input (data) and output (decision), but you can’t see the logic.

Why this is critical in trading:

- Money is at stake — errors cost real money

- Regulation — regulators demand explanations (SEC, FCA, ESMA)

- Risk management — you can’t manage what you don’t understand

- Trust — clients won’t hand over money based on “because AI said so”

Real Cases: When AI Traders Lost Millions

Case 1: Hedge Fund, $50M in One Day (2023)

What happened:

- A major hedge fund used proprietary AI for equity trading

- The AI traded autonomously, without human confirmation

- On March 15, 2023, during a spike in volatility (SVB collapse), the AI started making “unexplained trades”

- In 4 hours it made 1,247 trades (normally ~50 per day)

- Result: -$50M (-8% AUM)

Why it happened:

The AI spotted a pattern it interpreted as an “arbitrage opportunity.” But in reality, it was market microstructure noise (bid-ask bounce + thin liquidity).

Why it wasn’t stopped:

The algorithm operated so fast that by the time risk managers noticed, it was too late. A kill switch existed but only triggered after 3.5 hours (manual approval chain).

Lesson:

A black box without real-time explainability = a ticking time bomb.

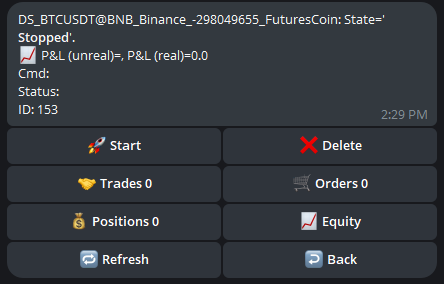

Case 2: CFTC vs AI Trading Bots — $1.7B in Losses (2019-2025)

The scheme:

- Companies sell “AI trading bots” promising “automated money-making machines”

- They promise 10-30% monthly returns

- They take client money under management or sell software

Results:

- Clients lost $1.7 billion (including 30,000 BTC)

- Most “AI” turned out to be simple scripts or outright Ponzi schemes

- No system disclosed its trading logic (“proprietary AI”)

Typical case:

Company X promised “deep learning AI trained on 10 years of data.” A client deposited $100,000. After 6 months, the balance: $23,000. They requested an explanation. Response: “Market conditions changed, AI adapting.” Three more months: balance $5,000. Company X disappeared.

Lesson:

If the AI doesn’t explain its decisions — that’s a red flag. Either it’s a scam, or the developers themselves don’t understand what their system is doing.

Case 3: 2010 Flash Crash — $1 Trillion in 36 Minutes

What happened:

- 2:32 PM EDT: The Dow Jones began falling

- In 5 minutes it dropped 998.5 points (9%)

- Individual stocks traded at $0.01 (nearly 100% drop)

- Within 36 minutes the market recovered

- Total “evaporated” capital: $1 trillion

The cause:

- A large institutional trader placed a sell order for $4.1B through an algorithm

- HFT algorithms started trading with each other (hot potato)

- Liquidity instantly evaporated

- Algorithms began “aggressively selling” to exit positions

- Cascading effect

SEC quote:

“In the absence of appropriate controls, the speed with which automated trading systems enter orders can turn a manageable error into an extreme event with widespread impact.”

Lesson:

Algorithms interact unpredictably. One algorithm + thousands of others = systemic risk.

Case 4: Knight Capital — $440M in 45 Minutes (2012)

What happened:

- Knight Capital deployed new trading software

- Due to a bug, the algorithm started sending millions of orders

- In 45 minutes it executed $7 billion in trades

- Result: -$440M (more than the annual revenue)

- The company went bankrupt

The cause:

Old code wasn’t removed. The new algorithm accidentally activated the old logic. The old logic was meant for testing, not production.

Lesson:

Code isn’t AI, but the principle is the same: automation without control = catastrophe.

Why 85% of Traders Don’t Trust Black Box AI

A 2025 study showed:

Distrust in black box AI:

- 85% of traders don’t trust systems without explanations

- 62% prefer simpler models with transparency

- 78% require “human in the loop” for final decisions

Reasons for distrust:

1. Inability to Explain Losses

Scenario:

Your AI robot trades for 3 months. Result: +15%. Excellent!

Month 4: -25%. What happened?

You ask the AI (if possible). Answer (if any): “Market regime changed.”

You: “Which regime exactly? What changed?”

AI: “…”

The problem: You can’t tell if this is a temporary drawdown (ride it out) or a fundamental failure (the strategy no longer works).

2. Regulatory Requirements

EU AI Act (2025) and the SEC require:

- Transparency in “high-risk AI systems” (including trading)

- Ability to explain decisions

- Human oversight

Quote from the EU AI Act:

“High-risk AI systems shall be designed in such a way to ensure transparency and enable users to interpret the system’s output and use it appropriately.”

The problem:

If your AI is a black box, you’re violating regulations. Fines up to EUR 35M or 7% of global revenue.

3. Inability to Debug

Classic algorithm:

# Strategy is losing money. Debugging:

print(f"SMA crossover signals: {signals}")

print(f"Entry prices: {entries}")

print(f"Stop losses hit: {stops_hit}")

# I see the problem: stops are too tight

Black box AI:

# Strategy is losing money. Debugging:

print(model.weights) # [0.234, -0.891, 0.445, ... 10,000 numbers]

# ???

# What does this mean? Which weight is responsible for what?

You can’t improve what you don’t understand.

4. Psychology: Fear of Losing Control

People prefer control over optimality.

Experiment:

- Group A: Uses black box AI with Sharpe 1.5

- Group B: Uses a simple strategy with Sharpe 1.0 but understands the logic

Result:

- 72% preferred Group B

- Reason: “I trust what I understand”

Participant quote:

“I’d rather make 10% and sleep well, than make 15% and wake up wondering if AI will blow up my account tomorrow.”

Types of Risks in Black Box Trading

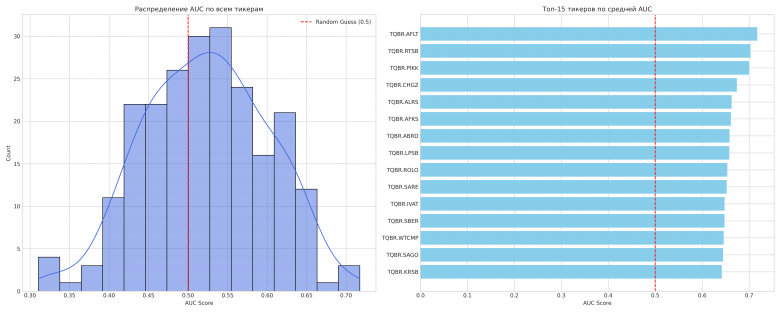

Risk 1: Overfitting (the #1 Strategy Killer)

What it is:

The model perfectly fit historical data but doesn’t work on new data.

Example:

A neural network trained on 2020-2023 (bull market). It sees a pattern: “when Bitcoin rises 5 days in a row, on day 6 the rise continues in 80% of cases.”

2024: bear market. The pattern doesn’t work. The model keeps buying on the 6th day of rise. Result: losses.

Why this is a black box problem:

With a classic algorithm, you can see the rule and change it. With a neural network — you can’t.

Statistics:

Research shows: 60-70% of ML models in finance suffer from overfitting at deployment.

Risk 2: Concept Drift (the Market Changes, the Model Doesn’t)

What it is:

The statistical properties of the market change; the model keeps trading on old patterns.

Examples of concept drift:

- 2020 COVID crash: Correlations between assets changed

- 2022 Fed rate hikes: Momentum strategies stopped working

- 2023 AI hype: Tech stocks began behaving differently

The problem:

A black box doesn’t say: “Attention! Concept drift detected!” It just keeps losing money.

Risk 3: Adversarial Inputs

What it is:

Specially crafted data designed to deceive the AI.

Example in trading:

HFT firms use spoofing (placing and canceling large orders). This creates fake liquidity.

The black box AI sees “high demand” and buys. The spoofer cancels orders. The AI bought at a high price.

Real case:

Research showed: AI systems are especially vulnerable to market manipulation because they don’t understand intent (genuine demand vs. fake).

Risk 4: Computational Failures

What it is:

AI requires computational resources. If resources are insufficient — decisions are delayed.

Examples:

- Internet outage: API disconnect — AI can’t see data — misses exit signals

- Server overload: During volatility, load increases — latency rises

- Cloud provider issues: AWS down — your AI is down

Statistics: 40% of AI bot failures are related to infrastructure issues, not models.

Risk 5: Flash Crashes (Systemic Risk)

What it is:

Multiple AI systems trading simultaneously create feedback loops.

Mechanism:

1. AI #1 sees a drop → sells

2. AI #2 sees AI #1's sell → sells

3. AI #3 sees the drop from #1 and #2 → sells

...

N. Price crashed 20% in a minute

Research shows: 14 micro-flash crashes occur daily on crypto exchanges.

Research quote:

“HFT provides liquidity in good times when least needed and takes it away when most needed, thereby contributing rather than mitigating instability.”

Explainable AI (XAI): Solution or Marketing?

What XAI is:

Explainable AI — methods that make AI decisions understandable to humans.

Popular methods:

1. SHAP (SHapley Additive exPlanations)

Idea: Show which features make the biggest contribution to a decision.

Example:

import shap

# Trained model

model = RandomForest()

model.fit(X_train, y_train)

# Explain prediction

explainer = shap.TreeExplainer(model)

shap_values = explainer.shap_values(X_test[0])

# Output:

# RSI: +0.15 (pushes toward buy)

# Volume: +0.08

# MA_cross: +0.12

# Volatility: -0.05 (pushes toward sell)

# ...

# TOTAL: +0.30 → BUY signal

Now it’s clear: The model buys mainly because of RSI and MA cross.

2. LIME (Local Interpretable Model-agnostic Explanations)

Idea: Approximate the complex model with a simple (linear) one locally.

Example:

from lime import lime_tabular

explainer = lime_tabular.LimeTabularExplainer(X_train)

exp = explainer.explain_instance(X_test[0], model.predict_proba)

# Output:

# IF RSI > 65 AND Volume > avg → -0.4 (sell signal)

# IF MA_short > MA_long → +0.6 (buy signal)

You can see: locally the model resembles the rule “MA cross > RSI overbought.”

3. Attention Mechanisms (for Neural Networks)

Idea: The neural network itself shows what it “looks at” when making a decision.

Example (Transformer for time series):

Model decision: BUY

Attention weights:

- Last 5 candles: 0.02 (ignore)

- Candles 10-15: 0.35 (important!)

- Candles 20-30: 0.15

- Volume spike: 0.40 (very important!)

Interpretation: The model bought because of a volume spike 10 candles ago + a pattern 10-15 candles ago.

Does XAI Actually Work?

Pros:

-

McKinsey 2025 report calls XAI a “strategic enabler” for AI adoption

-

Banks using XAI showed improved customer trust

-

Model risk management costs decreased (easier debugging)

Cons:

-

XAI explanations can be misleading (showing correlation, not causation)

-

Complex models (deep NNs) are still not fully explainable

-

XAI slows inference (computational overhead)

Conclusion:

XAI helps, but doesn’t fully solve the problem. A complex model will remain complex.

Regulation: What Authorities Require

EU AI Act (2025)

Came into force on August 1, 2024, with phased introduction of requirements:

Requirements for “high-risk AI” (including trading):

- Transparency: Systems must be transparent

- Human oversight: A human must be able to intervene

- Accuracy: Systems must be reliable

- Robustness: Protection against adversarial attacks

- Documentation: Detailed documentation of logic

Fines: Up to EUR 35M or 7% of global revenue (whichever is higher).

What this means:

If your AI robot is a black box, you’re breaking the law in the EU.

SEC (USA)

The SEC has initiated enforcement actions against companies for “AI washing” — false claims about using AI.

Examples of violations:

- Claimed “AI-powered” but used simple if-then rules

- Promised “deep learning” but didn’t disclose how the model works

- Exaggerated model accuracy

SEC’s position:

“AI washing could lead to failures to comply with disclosure requirements and lead to investor harm.”

FCA (UK) and ESMA (EU)

They require:

- Transparent decision-making for automated trading

- Kill switch (ability to instantly stop the system)

- Post-trade reporting (explanation of why a trade was made)

How to Protect Yourself from Black Box AI Risks

1. Use Hybrid Systems

Idea: AI generates signals, a human makes the final decision.

Example:

class HybridTradingSystem:

def __init__(self):

self.ai_model = DeepLearningModel()

self.risk_manager = HumanRiskManager()

def trade(self, market_data):

# AI generates signal

ai_signal = self.ai_model.predict(market_data)

confidence = self.ai_model.get_confidence()

# Explanation

explanation = self.get_explanation(market_data, ai_signal)

# Human approval for low confidence

if confidence < 0.7:

approved = self.risk_manager.approve(ai_signal, explanation)

if not approved:

return None

return ai_signal

Result: AI accelerates, human controls.

2. Implement XAI from Day One

Don’t:

model.predict(X) # Get answer, don't know why

Do:

prediction, explanation = model.predict_with_explanation(X)

log(f"Decision: {prediction}, Reason: {explanation}")

Always log explanations. When there’s a loss, you’ll know why.

3. Regularly Monitor Concept Drift

Code:

from scipy import stats

def detect_drift(recent_predictions, historical_predictions):

# KS-test to compare distributions

statistic, pvalue = stats.ks_2samp(recent_predictions, historical_predictions)

if pvalue < 0.05:

alert("Concept drift detected! Model may be outdated.")

return True

return False

# Every day

if detect_drift(last_30_days_predictions, training_period_predictions):

retrain_model()

4. Circuit Breakers and Kill Switches

Rules:

- Maximum daily drawdown: -5%

- Maximum trades per hour: 100

- Maximum position size: 10% of portfolio

Code:

class CircuitBreaker:

def __init__(self):

self.daily_loss = 0

self.trades_this_hour = 0

def check_before_trade(self, trade):

# Check daily loss

if self.daily_loss < -0.05:

raise CircuitBreakerTripped("Daily loss limit exceeded")

# Check trade frequency

if self.trades_this_hour > 100:

raise CircuitBreakerTripped("Hourly trade limit exceeded")

# Check position size

if trade.size > self.portfolio_value * 0.10:

raise CircuitBreakerTripped("Position size too large")

5. Backtest on Worst-Case Scenarios

Don’t test only on “normal” market conditions.

Test on:

- COVID crash (March 2020)

- Flash crash (May 2010)

- SVB collapse (March 2023)

- FTX collapse (November 2022)

Question: Would your AI survive a -20% day?

6. Start with Small Capital

Don’t:

“Backtest showed Sharpe 2.0, I’m putting in my entire portfolio!”

Do:

“Backtest showed Sharpe 2.0, I’ll start with 5% of my portfolio. In 3 months — I’ll increase.”

Statistics:

Research shows: 80% of strategies with good backtests fail in the first 3 months on live trading.

Conclusions

Can AI help in trading? Yes.

Can AI harm? Yes. Significantly.

Key takeaways:

- Black box AI is a risk — 85% of traders don’t trust systems without explanations

- Real losses are enormous — from $50M (hedge fund) to $1.7B (CFTC cases)

- Regulators demand transparency — EU AI Act, SEC, FCA

- XAI helps but isn’t a silver bullet — complex models remain complex

- The hybrid approach is safer — AI generates, human decides

Practical recommendations:

- Use XAI (SHAP, LIME) to explain decisions

- Implement circuit breakers and kill switches

- Monitor concept drift regularly

- Start with small capital

- Test on worst-case scenarios

- Do NOT trust “AI bots” without transparent logic

- Do NOT deploy a black box on your entire portfolio

- Do NOT ignore regulatory requirements

Next article:

Experiment: LLM + Classic Algorithm — can we improve a strategy with AI filters while preserving explainability?

AI is a powerful tool. But like any powerful tool, it requires caution, control, and understanding.

Returns without understanding is not an edge. It’s roulette.

Useful links:

Black box AI risks:

- Black Box AI: Hidden Algorithms and Risks in 2025

- AI in Finance: How to Trust a Black Box?

- Transparent AI vs Black Box Trading Systems

- Why Blackbox AI Matters to Businesses

Real failure cases:

- CFTC: AI Won’t Turn Trading Bots into Money Machines

- How AI Crypto Trading Bots Lose Millions

- Lessons from Algo Trading Failures

- Systemic Failures in Algorithmic Trading

Flash crashes and systemic risk:

Explainable AI:

- 2025 Guide to Explainable AI in Forex Trading

- Understanding Black Box AI: Challenges and Solutions

- Risks and Remedies for Black Box AI

Regulation:

Discussion

Join the discussion in our Telegram chat!